By now, many website owners understand that AI visibility is becoming more important.

They know that pages are no longer competing only for rankings in traditional search. They are also competing to be understood, summarized, trusted, and cited inside AI-powered experiences like ChatGPT, Perplexity, and Google AI Overviews.

In our previous analysis, we shared a broad pattern we saw across hundreds of pages: many pages already have a workable SEO and structural foundation, but they are not always fully ready for AI-powered discovery.

The next question is more practical:

What are the specific gaps that keep showing up across website pages?

That is what we looked at next.

And one pattern was especially clear:

The same few AI Search Readiness gaps appear again and again.

They are not always dramatic technical failures. More often, they are missing layers of clarity, trust, and support that make it harder for AI systems to confidently use the page in answers.

The most common gaps are not random

One of the most useful things about reviewing pages at scale is that repeated patterns become easier to see.

When the same issues show up page after page, they stop looking like isolated quirks and start looking like real visibility patterns.

Across the pages we reviewed, the most common gaps clustered around three broad areas:

- Answer readiness

- Trust reinforcement

- Supporting context

That matters because it suggests many pages are not failing in a completely chaotic way.

They are often missing a similar set of layers.

And when the same layers keep showing up as weak or missing, that gives website owners a much clearer place to start.

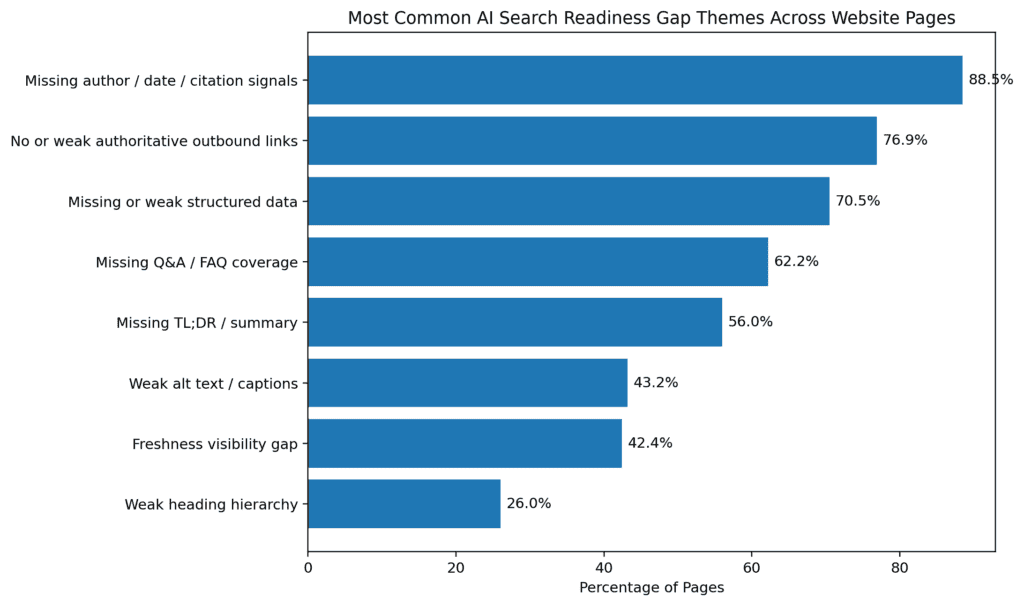

Most common AI Search Readiness gap themes across website pages

| Gap Theme | % of Pages |
| --- | --- |
| Missing author / date / citation signals | 88.5% |
| No or weak authoritative outbound links | 76.9% |
| Missing or weak structured data | 70.5% |
| Missing Q&A / FAQ coverage | 62.2% |
| Missing TL;DR / summary | 56.0% |
| Weak alt text / captions | 43.2% |
| Freshness visibility gap | 42.4% |
| Weak heading hierarchy | 26.0% |

Gap Clusters: How We Grouped the Most Common AI Search Readiness Gaps

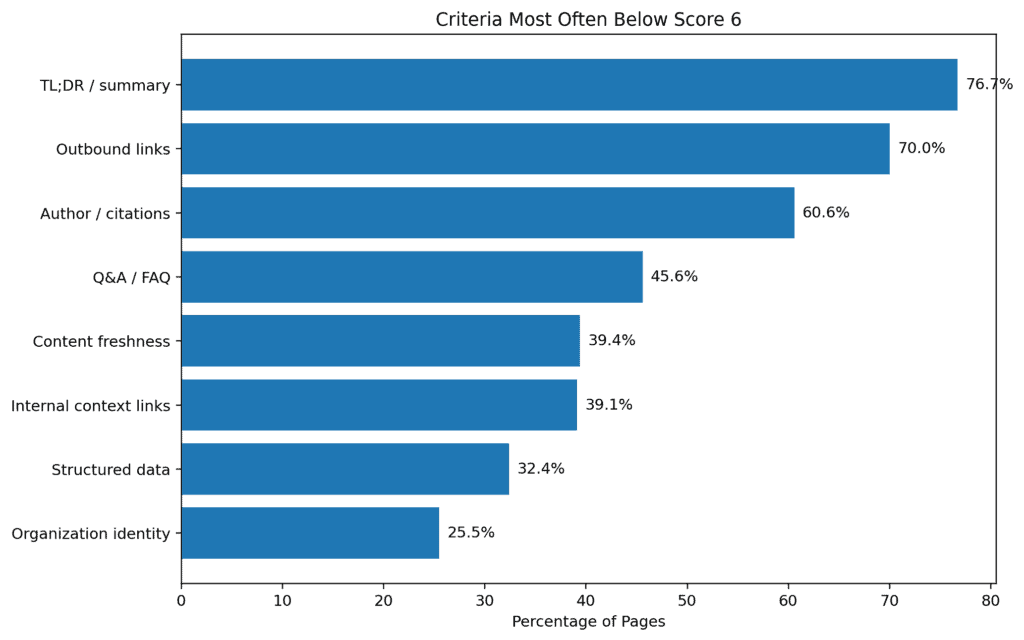

To better understand which AI Search Readiness issues appear most often, we looked across completed page evaluations in Purple Leaf’s AI Search Readiness framework. Each page is evaluated across multiple criteria, and each criterion is scored on a 0–10 scale based on how well the page supports AI understanding, extraction, trust, and citation. Those criterion-level findings are then rolled up into an overall page score, a high-level summary, and a set of strengths and weaknesses.

The table below shows the share of pages where selected AI Search Readiness criteria scored below 6 out of 10. This threshold is useful because it highlights which signals most often need attention across the dataset.

Criteria most often scoring below 6 (out of 10)

| Criterion | % of Pages Below 6 |
| --- | --- |
| TL;DR / summary | 76.7% |
| Outbound links | 70.0% |
| Author / citations | 60.6% |
| Q&A / FAQ | 45.6% |
| Content freshness | 39.4% |
| Internal context links | 39.1% |
| Structured data | 32.4% |
| Organization identity | 25.5% |
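The threshold calculation described above is simple arithmetic, and a minimal Python sketch may make it more concrete. It assumes each evaluated page is represented as a dictionary mapping criterion names to scores from 0 to 10. That input format, the function names `share_below` and `share_zero`, and the choice to skip pages that were not scored on a criterion are all hypothetical illustrations, not a description of how Purple Leaf's framework stores or aggregates its data. The comparison is strict, so a page scoring exactly 6 is not counted as below 6.

```python
from typing import Dict, List

# Hypothetical input format: one dict per evaluated page, criterion name -> score (0 to 10).
PageScores = Dict[str, float]


def share_below(pages: List[PageScores], criterion: str, threshold: float = 6.0) -> float:
    """Return the percentage of scored pages whose score for `criterion` is below `threshold`.

    Pages without a score for the criterion are left out of the denominator
    (an assumption made for this sketch).
    """
    scored = [page[criterion] for page in pages if criterion in page]
    if not scored:
        return 0.0
    below = sum(1 for score in scored if score < threshold)
    return 100.0 * below / len(scored)


def share_zero(pages: List[PageScores], criterion: str) -> float:
    """Return the percentage of scored pages that received exactly 0 for `criterion`."""
    scored = [page[criterion] for page in pages if criterion in page]
    if not scored:
        return 0.0
    zeros = sum(1 for score in scored if score == 0)
    return 100.0 * zeros / len(scored)


if __name__ == "__main__":
    # Made-up scores for four pages, for illustration only.
    sample = [
        {"TL;DR / summary": 0, "Outbound links": 7},
        {"TL;DR / summary": 4, "Outbound links": 5},
        {"TL;DR / summary": 8, "Outbound links": 0},
        {"TL;DR / summary": 6, "Outbound links": 9},
    ]
    for name in ("TL;DR / summary", "Outbound links"):
        print(f"{name}: {share_below(sample, name):.1f}% below 6, "
              f"{share_zero(sample, name):.1f}% at 0")
```

Since any page scoring 0 is also below 6, a criterion's zero-score share can never exceed its below-6 share when both are computed over the same pages.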

Gap cluster 1: Answer readiness is often weaker than it should be

One of the clearest recurring patterns was that many pages do not make answers easy to extract.

This showed up in several ways:

- No concise TL;DR-style summary near the top

- No visible Q&A or FAQ-style content

- Important answers buried too deep in the page

- Limited snippet-friendly formatting in some sections

This gap matters because AI systems are often looking for content they can reuse quickly and confidently.

A page may contain the right information, but if the answer is buried inside long blocks of copy, spread across multiple sections, or never stated directly, it becomes harder to summarize cleanly.

That is one reason why summary and Q&A-related signals matter so much.

They do not just help human users scan a page more easily. They also help AI systems identify:

- What the page is actually saying

- What question it helps answer

- Which parts are most reusable in an answer

When answer readiness is weak, the page becomes harder to use even if the content itself is not wrong.

Share of pages scoring below 6 (out of 10) in key answer-readiness areas

| Criterion | % of Pages Below 6 |
| --- | --- |
| TL;DR / summary | 76.7% |
| Q&A / FAQ | 45.6% |
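On the Q&A side of answer readiness, one common way to make visible question-and-answer content machine-readable is schema.org `FAQPage` markup in JSON-LD. The Python sketch below is an illustrative assumption rather than part of the Purple Leaf methodology: the function name `faq_jsonld` and the sample question are hypothetical, and the markup is only meant to describe Q&A content that already appears as visible text on the page.

```python
import json
from typing import List, Tuple


def faq_jsonld(pairs: List[Tuple[str, str]]) -> str:
    """Build schema.org FAQPage markup (JSON-LD) from (question, answer) pairs.

    The same questions and answers should also be visible on the page;
    this markup describes that content rather than replacing it.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Hypothetical Q&A pair, for illustration only.
    print(faq_jsonld([
        ("What is AI Search Readiness?",
         "How well a page supports AI understanding, extraction, trust, and citation."),
    ]))
```

The resulting JSON would typically be embedded in a `<script type="application/ld+json">` element. It can reinforce a real FAQ section, but it is not a substitute for writing one.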

Gap cluster 2: Trust reinforcement is often thin or missing

Another recurring pattern was the lack of visible support around credibility.

This showed up through issues like:

- Weak or missing author, date, or citation signals

- Limited authoritative outbound links

- Little visible support for claims

- Generic trust presentation rather than specific, verifiable support

This is important because relevance alone is not always enough.

AI systems may encounter content that is topically relevant but still lacks the surrounding signals that help reinforce why it should be trusted.

That does not mean every page needs academic citations or a formal author block.

But it does mean that many pages would be stronger if they did more to support their claims and make credibility easier to interpret.

For example:

- If a page makes technical or performance claims, does it support them?

- If a page is meant to represent the business, does it clearly show who is behind it?

- If freshness matters, is there a visible signal of recency?

- If expertise matters, is there anything on the page that reinforces it?

These kinds of support signals are often weaker than site owners realize.

And because they are less obvious than metadata or schema, they are often overlooked until a page is reviewed more deliberately.

Share of pages scoring below 6 (out of 10) in key trust-reinforcement areas

| Criterion | % of Pages Below 6 |
| --- | --- |
| Outbound links | 70.0% |
| Author / citations | 60.6% |
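Some of these trust signals (who wrote a page, who publishes it, when it was last updated, and which sources it draws on) can also be stated explicitly in structured data. The following Python sketch builds schema.org `Article` markup using the `author`, `publisher`, `datePublished`, `dateModified`, and `citation` properties. The helper name `article_jsonld` and all example values are hypothetical, and the markup is meant to reinforce author, date, and source information that is already visible on the page, not to stand in for it.

```python
import json
from datetime import date
from typing import List, Optional


def article_jsonld(
    headline: str,
    author_name: str,
    publisher_name: str,
    published: date,
    modified: Optional[date] = None,
    citations: Optional[List[str]] = None,
) -> str:
    """Build schema.org Article markup that states authorship, publisher, dates, and sources."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": publisher_name},
        "datePublished": published.isoformat(),
        # If the page has never been updated, the modified date equals the publish date.
        "dateModified": (modified or published).isoformat(),
    }
    if citations:
        # schema.org `citation` accepts Text or CreativeWork values; plain strings are used here.
        data["citation"] = citations
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Hypothetical values, for illustration only.
    print(article_jsonld(
        "Example article headline",
        "Jane Doe",
        "Example Co",
        date(2024, 5, 1),
        modified=date(2024, 9, 15),
        citations=["https://example.com/source"],
    ))
```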

Gap cluster 3: Supporting context is often incomplete

A third recurring pattern was weaker supporting context around the content.

This included issues like:

- Weak or inconsistent alt text

- Shallow contextual internal linking

- Limited supporting detail around key content blocks

- Pages that contain useful information but do not reinforce it well enough

This category is easy to underestimate because these issues often feel secondary.

But supporting context matters.

A page becomes easier to understand when the surrounding signals help reinforce what the page is about and how its parts connect.

For example:

- Strong alt text helps clarify the role of important images

- Contextual internal links help reinforce topic relationships

- Stronger section framing helps clarify why content matters

- Supporting structure helps reduce ambiguity

These are not always the first things site owners think about when they think about visibility.

But they are part of what helps a page feel more complete and more usable in AI-powered contexts.
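Alt text is one of the easier supporting-context gaps to check mechanically. As a minimal sketch, Python's standard-library `html.parser` can list images whose `alt` attribute is missing or blank. The class and function names are hypothetical, and the check is deliberately crude: an empty `alt` is the correct choice for purely decorative images, and a non-empty `alt` can still be unhelpful (a file name, for example), so both flagged and unflagged images still need human review.

```python
from html.parser import HTMLParser
from typing import List, Optional, Tuple


class AltTextAudit(HTMLParser):
    """Collect the `src` of <img> tags whose alt attribute is missing or blank."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: List[Optional[str]] = []

    def handle_starttag(self, tag: str, attrs: List[Tuple[str, Optional[str]]]) -> None:
        # HTMLParser also routes self-closing tags like <img ... /> through this method.
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = attributes.get("alt")
        # Flag a missing alt attribute, a valueless one, or one containing only whitespace.
        if alt is None or not alt.strip():
            self.missing.append(attributes.get("src"))


def images_without_alt(html: str) -> List[Optional[str]]:
    """Return the src values of images that have no usable alt text."""
    parser = AltTextAudit()
    parser.feed(html)
    parser.close()
    return parser.missing


if __name__ == "__main__":
    # Hypothetical page fragment, for illustration only.
    page = (
        '<img src="chart.png" alt="Most common gap themes across pages">'
        '<img src="logo.png">'
        '<img src="photo.jpg" alt=" "/>'
    )
    print(images_without_alt(page))
```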

Share of pages with zero scores in key gap areas

| Criterion | % of Pages with Score 0 |
| --- | --- |
| TL;DR / summary | 51.5% |
| Outbound links | 40.5% |
| Q&A / FAQ | 40.5% |
| Author / citations | 33.0% |
| Structured data | 25.5% |

What these gaps have in common

The recurring gaps we saw were different on the surface, but they shared an underlying theme:

They make content harder to summarize, support, and trust.

That is the key point.

Many of these pages were not unusable.

They were just missing the extra layers that make a page easier for AI systems to work with.

That is why these gaps matter so much.

A missing summary, weak FAQ coverage, or limited trust reinforcement may not look dramatic on its own. But together, these issues can make a page much less reusable in AI-generated answers.

This is also why site owners sometimes feel confused.

They look at a page and think:

- The content is there

- The page is live

- The page looks fine

- The page even ranks

And yet the page still does not seem to appear where they expected in AI-powered discovery.

Often, the reason is not total failure.

It is incompleteness.

Why these gaps matter even on otherwise decent pages

One of the most important lessons in this data is that these gaps often appear on pages that are otherwise fairly decent.

That is what makes them so important.

If the problem were only broken pages, the answer would be obvious.

But many pages with recurring AI Search Readiness gaps are not obviously broken. They may already have decent metadata, clear headings, some structured data, and a reasonable overall structure.

These recurring gaps are often the difference between a page that is merely present and a page that AI systems can more easily summarize, trust, and cite.

The practical takeaway

The biggest AI Search Readiness gaps are not always flashy technical problems.

More often, they are recurring missing layers:

- missing summaries

- missing Q&A coverage

- missing support for trust and credibility

- missing context around content and page meaning

That is good news in one sense.

It means many sites do not need a full rebuild.

They need a clearer view of the gaps that are quietly reducing their visibility.

That is a much more practical problem to solve.

Want to see which gaps are affecting your pages?

Purple Leaf helps identify the pages and signals that may be reducing your visibility in AI-powered search experiences.

Scan your website to see where your pages are missing important AI Search Readiness layers — and what to fix first.